Software project waste is costing companies and governments around the world about $1Bn per day in work that is completely unnecessary.

Every day, software teams around the world waste 30-60% of their time (and their employers' time and money) on inefficient practices. Most of this waste is avoidable. At any point in time, each member of the team may be doing useful work, but that work could have been avoided. In most cases, managers are unaware that this is going on.

This article looks at some of the main causes of waste in software work. Let us start with the wasteful activities of software teams. Everyone who has worked on a software project will have experienced several of these.

Software teams often waste time and effort:

- revisiting poorly designed features from previous sprints,

- understanding and reworking poorly structured code from other developers in previous sprints,

- addressing misunderstandings because the author of the requirement did not ensure that the requirements were clear enough for all readers (users, architects, developers, testers) to reach a common understanding,

- doing rework because the author of the requirement misunderstood what was actually needed,

- writing manual or automated tests against requirements that later turn out to be incorrect,

- changing or removing incorrect code because the requirements were poor,

- reworking or doing extra work because a poor data structure was chosen, perhaps because the requirements were inconsistent,

- implementing low-priority requirements because a complete picture of the full set of requirements was not considered or was not available,

- reworking code due to inconsistent and unstructured language use in requirements,

- fixing and retesting bugs that were avoidable if the requirements and designs had been clearer,

- writing overly detailed acceptance criteria for requirements that lack even a basic description,

- task switching caused by rework due to poor requirements or designs (the opposite of “do it well and do it once”),

- participating in (story point) estimation meetings which are principally devoted to discovering the meaning of the requirements, because the requirements are unclear,

- working on projects that are subsequently cancelled for exceeding their budget and timeline, because the overall scope was poorly or incompletely understood at the outset.

Software teams talk a great deal about lean practices, which are all about eliminating waste. Yet it is rare to find teams that carefully examine the actual sources and magnitude of their waste, determine its impact on progress and work out how it might be avoided in future. Most teams incur some or many of the wasteful activities above. The accumulated impact averages around 40-50% of all work done by software teams, and most of it is avoidable.

Wasted Effort in Knowledge Work

Wasted effort in knowledge work is common and substantial. For example, changing code that is already stable is very costly because that code, and all the features around it, need to be reconsidered and retested. Retesting is needed to ensure that the change has not introduced some adverse effect; a one-line code change can require retesting of thousands of lines of related code. Test-driven development (TDD) helps to alleviate this problem, but it does not prevent it, nor will it catch all possible defects.
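To make the retesting point concrete, here is a minimal Python sketch of impact analysis. It assumes a hypothetical module dependency graph (the module names and the `DEPENDS_ON` map are illustrative, not taken from any real system). Starting from a changed module, it walks the graph backwards and collects everything that depends on it directly or transitively. That set is roughly the code a cautious team would want to retest.

```python
from collections import deque

# Hypothetical dependency graph: each module maps to the modules it depends on.
DEPENDS_ON: dict[str, list[str]] = {
    "checkout": ["pricing", "inventory"],
    "pricing": ["tax"],
    "invoice": ["pricing", "tax"],
    "reports": ["invoice"],
    "inventory": [],
    "tax": [],
}


def impacted_by(changed: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every module that directly or transitively depends on `changed`."""
    # Invert the graph so we can walk from a module to the modules that use it.
    dependents: dict[str, list[str]] = {module: [] for module in depends_on}
    for module, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(module)

    # Breadth-first search over the inverted graph.
    impacted: set[str] = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for user in dependents.get(current, []):
            if user not in impacted:
                impacted.add(user)
                queue.append(user)
    return impacted


print(sorted(impacted_by("tax", DEPENDS_ON)))
# ['checkout', 'invoice', 'pricing', 'reports']
```

In this toy graph, a one-line change to `tax` brings four other modules into the retest scope, even though none of them was edited.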

On projects with larger teams, each person's work depends on someone else's previous work. Before changing some code, a developer has to understand what their predecessor wrote and then work out where else it is used. Only then can they start making the desired changes. The same applies to requirements, designs and tests. This pre-change learning can take hours or even days, so what seems to be a minor modification can become disproportionately expensive.

Jumping from one piece of code to the next involves context switching. To do knowledge work well, we need to focus and avoid context switching. Bug fixing involves more context switching than writing the code in the first place. Context switching is completely wasteful. The combination of relearning and context switching goes some way to explaining how a small code change can be extraordinarily expensive. Ideally we want to modify a method as few times as possible beyond the sprint in which it was created.

Do teams measure real waste?

In general, they do not. About $1Bn a day is being wasted by corporations and governments around the world, and very few of them are measuring it. This waste will not shrink or disappear by itself; proactive work is needed.

Root cause analysis can help identify where, why and how much waste is being incurred. Before starting a piece of upcoming work that requires a change or fix to existing code, we should ask ourselves some important questions. Here is a non-exhaustive list:

- Could this requirement or change have been foreseen or reasonably anticipated?

- How might we have known about this earlier so that we could have avoided having to change existing code? Could this change have been avoided altogether?

- How much extra effort and time does making this change now cost, compared with addressing it when the code was first written?

- How much extra time and effort is being devoted to regression testing as a result of this change?

- What is the knock-on effect of making this change now rather than earlier?

- Does this change introduce new performance or security risks on previously deployed code (does it introduce a bad-fix)?

- Is this change expressed in unambiguous terms, such that any reader will understand it?

The Most Common Root Cause

The root cause of most of this waste is poor work in the early parts of the software lifecycle: poor requirements work, hurried architecture decisions, and sloppy work on solution options or design.

Software teams rarely consider the actual impact of requirements defects on their productivity, or the waste those defects cause. Furthermore, they may overlook the cost of poor design decisions until long after the design has been implemented; this cost is often labelled technical debt.

Unclear requirements that are implemented with a poor and inflexible design can lead to extraordinary rework costs. This rework cost can then be made even higher if it causes a delay to other related and unrelated work. In some cases, and quite frequently on larger projects, poor requirements and poor early design decisions can lead to an entire project being scrapped.

Requirements defects discovered late are usually the most expensive type of defect to fix.

About $720Bn is spent p.a. globally on enterprise software work. About half of this is avoidable rework caused by poor requirements, poor design decisions and the consequences of both. Globally, this amounts to about $360Bn p.a., or about $1Bn per day.
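The headline figure can be checked in a few lines. This sketch takes the two inputs stated above (about $720Bn of annual spend, about half of it avoidable) and assumes a 365-day year. The day count is our assumption, not a figure from the text.

```python
ANNUAL_SPEND_BN = 720.0  # global enterprise software spend, $Bn per year (from the text)
AVOIDABLE_SHARE = 0.5    # "about half" is avoidable rework (from the text)
DAYS_PER_YEAR = 365      # assumption: calendar days, not working days

annual_waste_bn = ANNUAL_SPEND_BN * AVOIDABLE_SHARE
daily_waste_bn = annual_waste_bn / DAYS_PER_YEAR

print(f"Avoidable rework per year: ${annual_waste_bn:.0f}Bn")  # $360Bn
print(f"Avoidable rework per day:  ${daily_waste_bn:.2f}Bn")   # $0.99Bn
```

Counting only working days (around 250 a year) would raise the daily figure to about $1.44Bn.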

What About Agile?

Agile does not cure the waste that we have described here. The primary benefit of Agile software development methodologies is a fast feedback loop between users and developers. Yet in many cases, we see Agile adoption accompanied by a drop in the quality and diligence of requirements and other pre-coding work. Organisations are in a hurry to deliver new software-based capabilities, and those doing the work present an “adapt as we go” approach, which seems attractive. Done well, Agile practices help ensure that the customer gets something they want. When combined with sloppy pre-coding work, however, the result is significant rework during the project and more wasted effort.

Data from thousands of projects shows that about as many defects are introduced before coding as during coding.[i] The total number of defects likely to be introduced across all sources is known as the defect potential. Between 16% and 35% of all production defects are caused by poor or incomplete requirements.[ii]
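Using the per-source figures from footnote [i], a short calculation compares defects introduced before coding with those introduced during coding. The figures are in defects per function point (FP). Grouping requirements, architecture and design as the "pre-coding" sources is our assumption. Note also that the listed rows add up to 3.50, slightly less than the 3.65 total quoted in the footnote.

```python
# Defects per function point by source, as listed in footnote [i].
DEFECT_POTENTIAL = {
    "requirements": 0.75,
    "architecture": 0.15,
    "design": 1.00,
    "code": 1.25,
    "documents": 0.20,
    "bad fixes": 0.15,
}
# Assumption: these three sources are introduced before coding starts.
PRE_CODING = ("requirements", "architecture", "design")

pre_coding = sum(DEFECT_POTENTIAL[source] for source in PRE_CODING)
coding = DEFECT_POTENTIAL["code"]
listed_total = sum(DEFECT_POTENTIAL.values())

print(f"Introduced before coding: {pre_coding:.2f} per FP")
print(f"Introduced during coding: {coding:.2f} per FP")
print(f"Sum of listed sources:    {listed_total:.2f} per FP")
```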

Few software teams recognise that requirements and design defects are the root cause of so much wasted effort. You cannot develop until you have some idea of the requirements; in other words, development follows requirements. A poorly researched or poorly expressed requirement can lead to an entire team doing days or weeks of wasted work. This kind of waste is often written off as “learning” or “evolving user requirements” when actually it is neither: it is substandard requirements work. There are several reasons why requirements work might be poor. One is that many organisations fail to ensure that the staff who write the requirements are adequately trained and experienced to do high-quality requirements work. Another is that there is little agreement or consistency across the industry about what constitutes a good requirement.

Other Common Causes of Waste on Software Projects

We have mentioned poor prior work and context switching as leading causes of waste. Other common causes include:

- waste caused by poor leadership,

- waste caused by low skill levels, leading to increased rework and bugs,

- waste from following processes that appear to add value but do not,

- not following good processes that do add value but are perhaps unfashionable,

- waste in communication overhead due to excessive team size.
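One common way to explain why communication overhead grows with team size is to count the possible one-to-one communication paths. For a team of n people there are n(n-1)/2 such paths, a count popularised by Fred Brooks in The Mythical Man-Month. The sketch below assumes, as a simplification, that anyone on the team may need to talk to anyone else.

```python
def communication_channels(team_size: int) -> int:
    """Number of pairwise communication paths in a team: n(n-1)/2."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


for n in (3, 5, 9, 15):
    print(n, communication_channels(n))
# 3 3
# 5 10
# 9 36
# 15 105
```

Going from 5 to 15 people triples the team but multiplies the number of paths by more than ten, from 10 to 105.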

Software Requirements Are Often Poor Quality

Software requirements and user stories are often of poor quality. Perhaps the author is inadequately skilled or experienced, or perhaps they do not have access to the information they need. The problem with nearly all requirements is that they are written in unconstrained natural language, usually by authors with no formal training in writing quality requirements. Unconstrained natural language is not the ideal means of communicating software requirements, but it is what we mostly use. Most organisations do not manage requirements quality.

At ScopeMaster, we have analysed over 300,000 requirements from hundreds of software projects in different countries and industries. We have found that:

- On average, a requirement (or user story) consists of 12 words, excluding the acceptance criteria. Each word corresponds, on average, to about 125 coding words (tokens) being written. A mistake in a single word of a requirement might therefore lead to 125 coding words needing to be rewritten. These numbers should not be used as a basis for estimation, but they do show how important each word of a requirement is.

- On average, we see 4-8 problems in each requirement. These problems vary in severity, but unless they are fixed early, each is likely to cause a software bug.
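To show the scale these averages imply, the sketch below multiplies them out for a hypothetical backlog of 100 requirements. The constants are the averages quoted above. As the text warns, the result only illustrates how much code depends on each requirement word. It is not an estimate.

```python
AVG_WORDS_PER_REQUIREMENT = 12     # excluding acceptance criteria (from the text)
CODE_TOKENS_PER_WORD = 125         # average coding tokens per requirement word (from the text)
PROBLEMS_PER_REQUIREMENT = (4, 8)  # observed range of problems per requirement (from the text)


def backlog_profile(requirements: int) -> dict[str, object]:
    """Multiply out the averages for a backlog; an illustration, not an estimate."""
    words = requirements * AVG_WORDS_PER_REQUIREMENT
    low, high = PROBLEMS_PER_REQUIREMENT
    return {
        "requirement_words": words,
        "code_tokens": words * CODE_TOKENS_PER_WORD,
        "latent_problems": (requirements * low, requirements * high),
    }


print(backlog_profile(100))
# {'requirement_words': 1200, 'code_tokens': 150000, 'latent_problems': (400, 800)}
```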

Common requirements defects include:

- Not user oriented

- Inconsistent

- Unclear

- Untestable

- Unsizeable

- Excessive technical content

- Duplicated

- Missing

- Not valuable

- Too verbose
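Some of these defect types lend themselves to automated checks. Below is a deliberately simple, rule-based sketch covering five of them: not user oriented, unclear, excessive technical content, too verbose and duplicated. The word lists, the 30-word threshold and the "As a <role>" test are hypothetical heuristics chosen for illustration. This is not how ScopeMaster or any other tool works; real tools rely on much richer natural language analysis.

```python
import re
from dataclasses import dataclass

# Hypothetical word lists and threshold, chosen for illustration only.
VAGUE_TERMS = {"fast", "easy", "user-friendly", "appropriate", "etc", "flexible", "simple"}
TECH_TERMS = {"api", "database", "endpoint", "json", "sql", "table"}
MAX_WORDS = 30
USER_STORY = re.compile(r"^\s*as an? \w+", re.IGNORECASE)


@dataclass
class Finding:
    index: int      # position of the requirement in the input list
    attribute: str  # defect type from the list above
    detail: str


def words(text: str) -> list[str]:
    """Lower-cased words, keeping internal hyphens and apostrophes."""
    return re.findall(r"[a-z][a-z'-]*", text.lower())


def lint(requirements: list[str]) -> list[Finding]:
    findings: list[Finding] = []
    seen: dict[str, int] = {}  # normalised text -> index of first occurrence
    for i, text in enumerate(requirements):
        tokens = words(text)
        if not USER_STORY.match(text):
            findings.append(Finding(i, "Not user oriented", "no 'As a <role>' opening"))
        vague = sorted(VAGUE_TERMS.intersection(tokens))
        if vague:
            findings.append(Finding(i, "Unclear", "vague terms: " + ", ".join(vague)))
        tech = sorted(TECH_TERMS.intersection(tokens))
        if tech:
            findings.append(Finding(i, "Excessive technical content", "terms: " + ", ".join(tech)))
        if len(tokens) > MAX_WORDS:
            findings.append(Finding(i, "Too verbose", f"{len(tokens)} words"))
        key = " ".join(tokens)
        if key in seen:
            findings.append(Finding(i, "Duplicated", f"same as requirement {seen[key]}"))
        else:
            seen[key] = i
    return findings


stories = [
    "As a shopper, I want to save my basket so that I can buy later.",
    "The checkout page must be fast and user-friendly.",
    "As a clerk I want an API endpoint that writes orders to the database table.",
    "As a shopper, I want to save my basket so that I can buy later",
]
for f in lint(stories):
    print(f.index, f.attribute, "-", f.detail)
# 1 Not user oriented - no 'As a <role>' opening
# 1 Unclear - vague terms: fast, user-friendly
# 2 Excessive technical content - terms: api, database, endpoint, table
# 3 Duplicated - same as requirement 0
```

Checks like these catch only surface symptoms. Defect types such as "inconsistent" or "missing" require reasoning across the whole set of requirements.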

Different readers often interpret the same written requirement differently. If the difference in interpretation is non-trivial, it is likely to lead to a software bug. If that bug is not identified until later, much work may already have been done that will need redoing to fix it. Getting it right first time, or finding defects early, really matters.

Quality Attributes of Good Software Requirements

There are various sources and opinions about what constitutes a good quality requirement. The IEEE has done great work here and regularly revisits the topic. There is also a commonly adopted, although in our view deficient, list of attributes promoted by various agile coaches and encapsulated in the INVEST mnemonic.

Here is our list of requirement quality attributes.

Checking that each requirement addresses all of the above well is tedious work, but it is necessary if you want to minimise waste. Thankfully, tools such as ScopeMaster can now do the lion's share of this requirements quality assurance for you.

Other forms of Software Project Waste

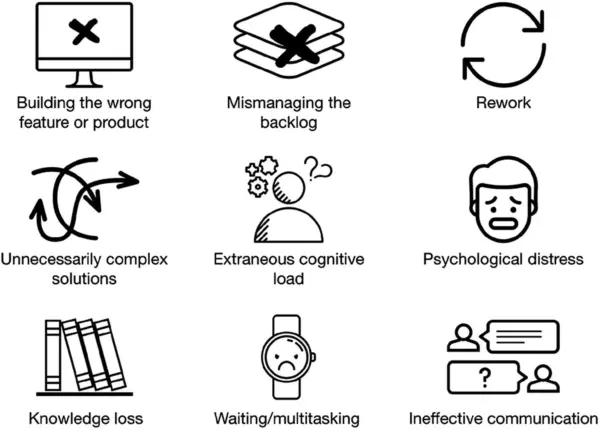

Thanks to Todd Sedano for his very helpful image above, which shows the common forms of software project waste. We have covered the first three in some detail, as they are the leading root causes of project waste. Let us look at the others:

Unnecessarily complex solutions

Technical debt, complex integrations, a mixture of languages, complex business rules and poor software architectures all lead to overly complex solutions. These solutions waste time in rework, in discovering how systems work and in ensuring that a change does not cause undesired results. Conversely, good architecture leads to efficient flexibility.

Extraneous cognitive load

Software project waste is sometimes caused by giving developers and designers additional distractions and constraints. Successful projects shield developers, testers and designers from anything they do not need to worry about.

Psychological Distress

This cause of waste tends to be less common. It usually stems from external pressures or, in some rare cases, very poor management.

Knowledge loss

In large or complex systems environments, continuity of personnel helps to retain knowledge. If experts leave it may take weeks or months of learning to catch up to the level of the departing staff.

Multitasking/waiting

Software projects waste time and effort on task switching (a result of multitasking). It can often take a developer 20-50 minutes to get back to where they were after a distraction interrupts their train of thought.
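The 20-50 minute recovery range adds up quickly. This sketch assumes a hypothetical four interruptions per working day (an illustrative figure, not one from the text) and converts the recovery time into hours of lost focus.

```python
INTERRUPTIONS_PER_DAY = 4  # hypothetical figure for illustration


def focus_hours_lost(interruptions: int, recovery_minutes: float) -> float:
    """Hours per day spent getting back into the work after interruptions."""
    return interruptions * recovery_minutes / 60


for minutes in (20, 50):  # the recovery range quoted in the text
    hours = focus_hours_lost(INTERRUPTIONS_PER_DAY, minutes)
    print(f"{minutes} min recovery: {hours:.1f} h/day")
# 20 min recovery: 1.3 h/day
# 50 min recovery: 3.3 h/day
```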

Ineffective Communication

We have largely covered this with poor requirements. But sometimes other project communication is poorly handled, for example if executives fail to give clear guidance on what is important to the business.

Avoiding the Waste

To avoid this waste, we just need to do better requirements work and better design work. Is it that simple? Yes.

Write better requirements that communicate clearly and consistently to all readers. Then spend adequate time ensuring that design options are carefully considered and that designs are reviewed and optimised before coding. If you do this, good things will happen and waste will be minimal.

Rarely is there adequate focus on requirements quality. Furthermore many Agile approaches, frameworks and trends are undermining proven, efficient ways of delivering high quality software first time around. Requirements quality and design quality can be measured, tracked and improved, but rarely are.

Teams Need Help to Solve this Problem

Writing good requirements is not easy; it requires training and experience. Many teams have a product owner who is either a subject matter expert or an experienced BA. They may know the business but not how to write good quality requirements. Here are some reasons why this problem remains largely unaddressed:

1. There is little job reward for challenging the requirements quality

2. If the requirement is poor and you have to build it twice, you get paid more

3. Requirements authors rarely have intimate knowledge of the user need, existing systems and how to write good requirements.

4. Often the requirements authors are developers who write down jargon-rich development tasks rather than user requirements that everyone can readily understand.

5. Teams rarely constrain the language of requirements to that which achieves consistently clear common understandings.

6. The team relies on discussion as a means of clearing up misunderstandings. The discussions often go undocumented.

7. There is a wide variety of opinions on what constitutes a good quality requirement.
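Point 5 can be addressed in part by agreeing a controlled vocabulary. Here is a minimal sketch, assuming a hypothetical team glossary in which each concept has one canonical term. The code flags requirements that use a disallowed synonym instead of the canonical term. It matches exact words only, so plurals and multi-word phrases would need more work.

```python
import re

# Hypothetical team glossary: canonical term -> synonyms the team has agreed not to use.
GLOSSARY: dict[str, set[str]] = {
    "customer": {"client", "buyer", "purchaser"},
    "order": {"purchase", "transaction"},
}


def non_canonical_terms(requirement: str) -> dict[str, str]:
    """Map each disallowed synonym found in `requirement` to its canonical term."""
    words = set(re.findall(r"[a-z]+", requirement.lower()))  # exact words only
    found: dict[str, str] = {}
    for canonical, synonyms in GLOSSARY.items():
        for synonym in sorted(synonyms & words):
            found[synonym] = canonical
    return found


print(non_canonical_terms("As a client, I want to cancel a purchase."))
# {'client': 'customer', 'purchase': 'order'}
```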

2022 and Automation/AI Come to the Rescue

Tools are emerging that can help with requirements quality assurance (QA). Vendors of requirements management tools are working on new capabilities to help with some aspects of requirements QA; IBM DOORS, Jama Connect, Visure Solutions and ScopeMaster are examples. Our own tool, ScopeMaster, is the most advanced tool available for the problems described in this paper. It automates requirements QA early in the software lifecycle. It is like a static analyser for requirements: it does for requirements what SonarQube does for code.

ScopeMaster and some other tools use Natural Language Processing and other analytical techniques to interpret and test requirements. ScopeMaster guides the requirements author to improve the quality of the requirements with a speed and thoroughness that were previously impossible. (On average, ScopeMaster performs 100,000 tests on a set of 100 user stories in 4 minutes.) It typically finds 2-4 defects per requirement in just a few seconds.

ScopeMaster will find about half of all the latent defects in a set of requirements, with defect types covering 9 of the 10 quality attributes shown above. This can help teams raise the quality of a set of requirements to a much higher standard very quickly, helping to alleviate this $360Bn p.a. problem.

Conclusion

If you are involved in software projects in a corporate or government sector, you may be wasting up to 50% of your budget on avoidable work. The root cause of much of this waste is poor requirements and system design work. Recognising that there is a problem, and that this is the likely root cause, is the first step in being able to resolve it. The next step is to introduce a quality improvement initiative that addresses the start of the development lifecycle: objectives, requirements, architecture and design. Start to measure defects in requirements and the impact that they are having on your progress. Recognise defect potentials and introduce initiatives to find and fix those defects as early as possible. Tools such as ScopeMaster are game changers for software certainty.

Written by Colin Hammond, 37-year IT veteran and founder of ScopeMaster.

[i] Defect potential by defect source:

| Defect source | Defects per function point |
| --- | --- |
| Requirements defects | 0.75 |
| Architecture defects | 0.15 |
| Design defects | 1.00 |
| Code defects | 1.25 |
| Document defects | 0.20 |
| Bad fix defects | 0.15 |
| Total defect potential | 3.65 |

https://www.ifpug.org/wp-content/uploads/2017/04/IYSM.-Thirty-years-of-IFPUG.-Software-Economics-and-Function-Point-Metrics-Capers-Jones.pdf

[ii] Capers Jones and Accenture, January 2021

The Economics of Software Quality, by Capers Jones and Olivier Bonsignour.