Update 2023

This article was originally written in late 2018. Now, in 2023, more information is being reported; see the April 2023 update at Computer Weekly.

What we know now is that there were “some issues”, i.e. bugs, that affected different groups of clients in different ways. It took TSB at least 7 months to resolve them. We can only estimate the total number of bugs, but it is likely to be in the range of 4 to 140. The lower bound comes from the at least 4 reported failure scenarios. The upper bound comes from the repair rate: the most likely number of bugs that can be repaired in a heavy-use production system is about 1 per day, regardless of team size, and 7 months is roughly 140 working days.

Cost of Quality Assessment

It cost TSB £32.7m in redress to customers, plus an £18.9m fine. We can estimate about £3.2m of technical effort and a further impact on customer service staffing of about £52m (allowing 1 call at £10 per customer). Then there is the opportunity cost of having to resolve this rather than move the bank forward. Finally, the bank will have lost some customers over this.

The total estimated cost appears to be in excess of £100m, which gives us a cost of around £1m per defect not discovered before go-live, assuming a defect count towards the upper end of the estimated range.
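The arithmetic behind these figures can be laid out as a small sketch. The four cost items are the estimates quoted above; the defect counts of 4 and 140 are the bounds estimated earlier, and the figure of 100 is purely an illustrative assumption to show where "around £1m per defect" would come from.

```python
# Cost items in £ millions, as estimated in the text above.
costs_gbp_m = {
    "customer_redress": 32.7,
    "regulatory_fine": 18.9,
    "technical_effort": 3.2,   # estimate
    "customer_service": 52.0,  # estimate: 1 call at £10 per customer
}

total_gbp_m = sum(costs_gbp_m.values())


def cost_per_defect(total_m: float, defects: int) -> float:
    """Average cost (in £m) of each defect that escaped into production."""
    if defects <= 0:
        raise ValueError("defects must be a positive number")
    return total_m / defects


print(f"Total estimated cost: £{total_gbp_m:.1f}m")

# 4 and 140 are the estimated bounds; 100 is an illustrative assumption.
for defects in (4, 100, 140):
    per_defect = cost_per_defect(total_gbp_m, defects)
    print(f"{defects:>3} defects -> £{per_defect:.2f}m per defect")
```

The opportunity cost and lost customers are not included, so the true figure per defect would be higher.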

How could it have been avoided?

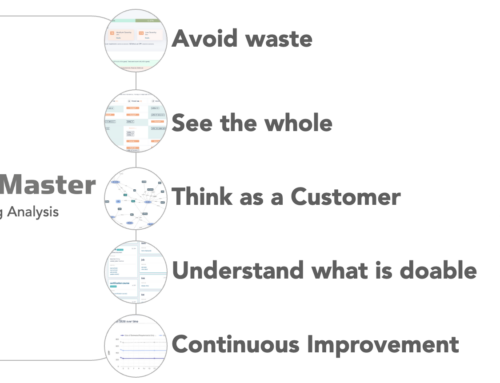

Knowing the defect potential of a software endeavour can inform you of how many defects you need to find before you are ready to go live. It is predictable. Using various techniques that revolve around knowing the functional size of the software, defect potentials, defect risk areas, defect discovery rates, test coverage and more, we can work out what types of testing will be needed and how much testing is enough to achieve satisfactory quality. The point is that this terrible situation was both predictable and avoidable. It’s also interesting to look at the career of Carlos Abarca on LinkedIn: nowhere does the word quality appear.
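As a rough illustration of how such a prediction works, here is a minimal sketch. It assumes a widely quoted rule of thumb, attributed to Capers Jones, that defect potential is approximately the functional size in function points raised to the power 1.25. The 1,000 function point size and the removal efficiencies are hypothetical values chosen for illustration, not figures from the TSB project.

```python
def defect_potential(function_points: float, exponent: float = 1.25) -> float:
    """Rule-of-thumb defect potential; the 1.25 exponent is an assumption."""
    if function_points <= 0:
        raise ValueError("function_points must be positive")
    return function_points ** exponent


def remaining_defects(potential: float, removal_efficiency: float) -> float:
    """Defects still in the system after removing the given fraction."""
    if not 0.0 <= removal_efficiency <= 1.0:
        raise ValueError("removal_efficiency must be between 0 and 1")
    return potential * (1.0 - removal_efficiency)


size_fp = 1_000  # hypothetical functional size
potential = defect_potential(size_fp)
print(f"Estimated defect potential: {potential:.0f}")

# Hypothetical removal efficiencies to compare before a go-live decision.
for efficiency in (0.85, 0.95, 0.99):
    left = remaining_defects(potential, efficiency)
    print(f"Removal efficiency {efficiency:.0%}: about {left:.0f} defects remain")
```

The value of a calculation like this is that it turns "have you done enough testing?" into a question with a number attached.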

What is Adequate Scrutiny in 2023?

We can only guess at the conversations that might have taken place:

Exec: “Have you done enough testing?”

Tech: “Yes, here are the hundreds of tests that we did, and they all passed.”

Exec: “Good, so are you confident it’s OK to go live?”

Tech: “Sure.”

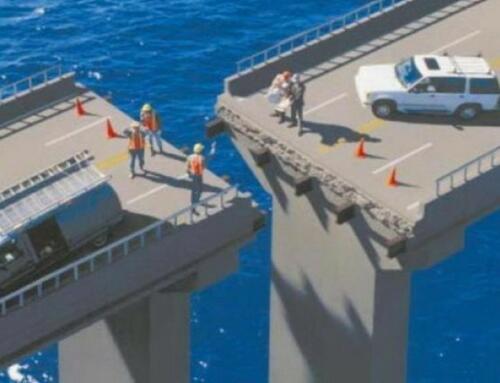

The failure was both predictable and avoidable. It would probably have taken only a couple of months to find and fix those bugs before the migration. No doubt everyone wishes they had spent those two months solving those problems. I wonder what measures TSB and Sabadell are employing now to ensure that this scenario is not repeated.

Colin Hammond, April 2023

The news of TSB showing incorrect transactions in people’s accounts is evidence of a software project gone wrong due to poor quality work. Why is this, and how could it have been avoided? The underlying root cause could be any of a range of possibilities. In this article I explore some of the most likely, and the lessons we can learn from them.

Legacy Systems under Pressure

At the core of most banks and large financial institutions is software that was originally written long ago and has proven to be very reliable. We call these legacy systems. As we demand different ways of accessing our banking data, the underlying code of these legacy systems finds itself working in ways that the original developers never imagined or intended, and this can lead to infrequent bugs.

Maintaining Legacy Technologies

Most of these old systems are written in old technologies such as the COBOL language. Most COBOL developers have now retired, and there are very few youngsters who are keen to learn such dead languages. Consequently, there is a shortage of highly skilled developers for these old systems.

Risk Leads to Abstraction

Migrating legacy systems to newer technologies is hard and risky. It can take years to plan and implement. Replacement of a core system can be so potentially disruptive that management avoid doing so altogether. One tactic that allows banks to move forward, offering new functions to users, is to wrap these old systems using a technique called Abstraction. They are treated as a “black box”: we don’t need to worry about how they work, we just need to be confident of their inputs and outputs. This technique postpones the eventual need to replace these systems.
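To make the idea concrete, here is a minimal sketch of wrapping a legacy system. Everything in it is hypothetical: `LegacyCoreBanking` is a stand-in that returns a fixed-width record of the kind an old system might produce, and `AccountService` is the wrapper that newer applications would call. It is not a description of any real bank's interface.

```python
from dataclasses import dataclass
from decimal import Decimal


class LegacyCoreBanking:
    """Hypothetical stand-in for a legacy system returning fixed-width records."""

    def fetch_record(self, account_id: str) -> str:
        # 8-character account id followed by a 12-digit balance in pence.
        return f"{account_id:<8}{1234567:012d}"


@dataclass(frozen=True)
class Balance:
    account_id: str
    amount: Decimal  # pounds


class AccountService:
    """Wrapper: callers depend on this interface, not on the legacy format."""

    RECORD_LENGTH = 20

    def __init__(self, legacy: LegacyCoreBanking) -> None:
        self._legacy = legacy

    def get_balance(self, account_id: str) -> Balance:
        record = self._legacy.fetch_record(account_id)
        # Be confident of the black box's outputs: check before trusting them.
        if len(record) != self.RECORD_LENGTH or not record[8:].isdigit():
            raise ValueError("unexpected record format from legacy system")
        pence = int(record[8:])
        return Balance(record[:8].strip(), Decimal(pence) / 100)


balance = AccountService(LegacyCoreBanking()).get_balance("AC001")
print(balance)
```

The wrapper is also the natural place to check the legacy system's outputs, since it is the only code that knows the old format.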

Architecture and Complexity

As new banking products are created, more and more dependent systems “hang off” the legacy system, creating an ever more complex picture of how data is moving between these systems. Over-complex systems can be the cause of many bugs. A good IT architecture will help to combat or contain this complexity and its associated risk.

Evolved Code

Systems that have been around a long time have often been tinkered with by many programmers over the years. Sometimes there is little knowledge handover from one developer to the next. The new guy doesn’t understand what the last guy did and he is reluctant to change existing code, in case he breaks it. So instead he writes new code to sit alongside the old code. Both sets of code probably do the same thing, but when the next developer comes along, he can’t be sure which code to work on. This is one of the ways that complexity can build up.

Testing alone is not enough to ensure quality

Studies of thousands of IT projects provide us with evidence that testing alone will not ensure that all bugs have been found. In fact, testing will rarely find more than 85% of bugs. Testing needs to be supplemented by other quality improvement techniques that can identify potential bugs even before you start testing. Some of the most effective are static analysis and formal reviews covering requirements, architecture, design and code.
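The effect of combining techniques can be sketched with a simple calculation. It assumes each stage independently removes a fixed fraction of the defects that reach it, which is a simplification. The 85% figure for testing is the ceiling mentioned above; the 50% figures for reviews and static analysis are hypothetical values for illustration only.

```python
from math import prod


def cumulative_removal_efficiency(stage_efficiencies) -> float:
    """Fraction of defects removed by a sequence of independent stages."""
    stages = list(stage_efficiencies)
    if any(not 0.0 <= e <= 1.0 for e in stages):
        raise ValueError("each efficiency must be between 0 and 1")
    # A defect escapes only if every stage misses it.
    return 1.0 - prod(1.0 - e for e in stages)


testing_only = cumulative_removal_efficiency([0.85])

# Hypothetical: formal reviews 50%, static analysis 50%, then testing 85%.
combined = cumulative_removal_efficiency([0.50, 0.50, 0.85])

print(f"Testing alone: {testing_only:.1%} of defects removed")
print(f"Reviews + static analysis + testing: {combined:.1%} of defects removed")
```

Under these assumptions the share of defects escaping into production falls from 15% to under 4%, which is why the earlier stages matter so much.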

Test that it doesn’t do what it shouldn’t

Suppose you are doing a transaction on your banking app, the connection is lost and only half the instruction is sent to the bank. How does the bank’s system handle half an instruction? If that system is connected to a legacy system, how does the legacy system handle an incomplete instruction? Whenever we make a system change we always test that the new system does what it is supposed to do. We must also ensure that it doesn’t do what it shouldn’t. This kind of testing tends to get overlooked.
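A minimal sketch of this kind of negative test is shown below. The message format, the field names and the `parse_payment` function are all hypothetical, invented for illustration; the point is simply that the test suite checks the rejection of a half-sent instruction as well as the acceptance of a complete one.

```python
REQUIRED_FIELDS = ("from_account", "to_account", "amount_pence")


class IncompleteInstruction(ValueError):
    """Raised when a payment instruction is missing required fields."""


def parse_payment(message: dict) -> dict:
    """Accept a payment instruction only if it is complete and sensible."""
    missing = [field for field in REQUIRED_FIELDS if field not in message]
    if missing:
        raise IncompleteInstruction(f"missing fields: {', '.join(missing)}")
    amount = message["amount_pence"]
    if not isinstance(amount, int) or amount <= 0:
        raise ValueError("amount_pence must be a positive whole number")
    return {field: message[field] for field in REQUIRED_FIELDS}


def complete_instruction_is_accepted() -> bool:
    """Positive test: the system does what it should."""
    message = {"from_account": "AC001", "to_account": "AC002", "amount_pence": 2500}
    return parse_payment(message) == message


def truncated_instruction_is_rejected() -> bool:
    """Negative test: a half-sent instruction must not be processed."""
    truncated = {"from_account": "AC001"}  # connection lost mid-send
    try:
        parse_payment(truncated)
    except IncompleteInstruction:
        return True
    return False


print("complete accepted:", complete_instruction_is_accepted())
print("truncated rejected:", truncated_instruction_is_rejected())
```

In a real project the same idea extends to malformed amounts, duplicated instructions and out-of-order messages.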

Compressed timetable

No doubt the migration work carried out by TSB will have been extensively tested. As with all such projects, though, the team will have been under some time pressure to complete their work by a particular date. A compressed timescale is one of the most common causes of IT project failure.

Technical Skills

Coding is creative work that takes ability and experience. For example, there are many ways of coding the same outcome: one coder can achieve a set of functionality in just one or two lines, while another may have to write fifty. On the whole, more compact, terse code tends to be of higher quality. Developer skills vary dramatically. Compare a singer who is pitch perfect with another who can’t even carry a tune: they both call themselves “singers”. Developers of low competence can introduce more bugs than fixes or functionality.

Business pressure for new requirements

Management are always under pressure to grow their business, and in some cases can lose sight of the importance of stable, accurate systems when under pressure to deliver new features and capabilities.

Bugs not Predicted so the Business Risk was not Understood

Contrary to many people’s beliefs, bugs in software can be predicted and measured. Using standard software metrics, it is possible to estimate how many bugs you have left in a system before going live. If managers are told how many outstanding defects are yet to be found, they might not approve the decision to go live. It is disappointing to see that very few people use these metrics. They are (like COBOL) unfashionable, but they work. They bring considerable certainty to an industry that has fallen in love with “fail fast” and “rapid deployment” over proven metrics.

Conclusion

There are many possible reasons for the recent TSB problems, and I suggest some of them here. The truth is that replacing legacy systems is more than just an IT responsibility; in some cases it is a matter of survival for the entire business. Banking customers might be tolerant if their banking app is unavailable for a few hours, but they will not accept incorrect balances or severely delayed transactions. This undermines trust, and without trust, banking customers will go elsewhere.

Colin Hammond is an IT project assurance consultant and author of ScopeMaster, a tool for bringing certainty to IT projects.